Like many, I got addicted to Business Insider’s “So Expensive” series. I thought about the whole concept of luxury, and then I wondered why software is so expensive. Are people who write software similar to tailors, customizing well-crafted products for a wealthy few? Not really. Someone named Kurt on this Quora thread wrote that software is very cheap to replicate but very expensive to produce, as is the case with a video game.

It SEEMS to logically track that software as an industry has been lucrative in the past due to high margins and high scalability, i.e., one product like the Uber app or Facebook could scale out to millions of people without having to manufacture relatively expensive materials per customer. The truth, surely, is more nuanced – take, for instance, the very high percentage of startups that fail, or tech companies that trade at high P/E ratios (well, fine…someone could rightly call me out for pointing that out). The reason why the tech industry has been so lucrative in the past COULD be high scalability or high margins, or it could just be…

Vibes? People purchase stocks that they think will be more valuable over time.

And now there’s generative AI. Why CAN’T software be like a luxury service, with people devising customized software for high prices? It seemed like a pretty good idea for a blog post.

Until it didn’t.

Claude and Critical Thinking

ThePrimeTime hates Claude Code, calling it an unethical and dishonest service that constantly employs false advertising while simultaneously releasing a shitty product. I like it, from what I’ve seen, but whether a business produces a good product and whether the business as a whole is ethical are two different questions – and ThePrimeTime also points out that the value is inflated, so over time the quality could degrade. Before I paid for a subscription, I found their ads pretty annoying. If Claude is really so great, I thought, why do they need to pay all these tech influencers to glaze their product?

What I have used Claude for, however, is exactly the kind of thing experts advise against (source: Claude). I had it analyze biometric data and devise diet plans. I had it provide me with financial insights. Claude Chat, in my experience, does not hallucinate nearly as often as the free edition of ChatGPT. The concern? It’s still fundamentally built on the same architecture. The numbers it provides me with COULD BE wrong, and it would be very difficult to verify. So Claude Chat mouths off the exact same warning experts provide: Don’t actually use it as a doctor, financial advisor, or therapist.

Once the financial advisors, doctors, and therapists start relying on Claude, we will have gone full AI apocalypse.

But what I do find kind of interesting here is the angle of critical thinking. Sometimes these AI tools seem to be a shortcut for the kind of thinking we could have done in the days before electronics, such as…I don’t know…a quiet walk, contemplation under an oak tree, what have you. What am I going on about? If I have a really important life decision, like whether to have a second child or whether to move back to San Francisco, then generative AI probably isn’t the ideal tool. The answer would be difficult to verify. My life circumstances are unique. The best thing generative AI could do is provide me with the average advice of 1,000 people in similar circumstances, but even that would require a degree of critical thinking.

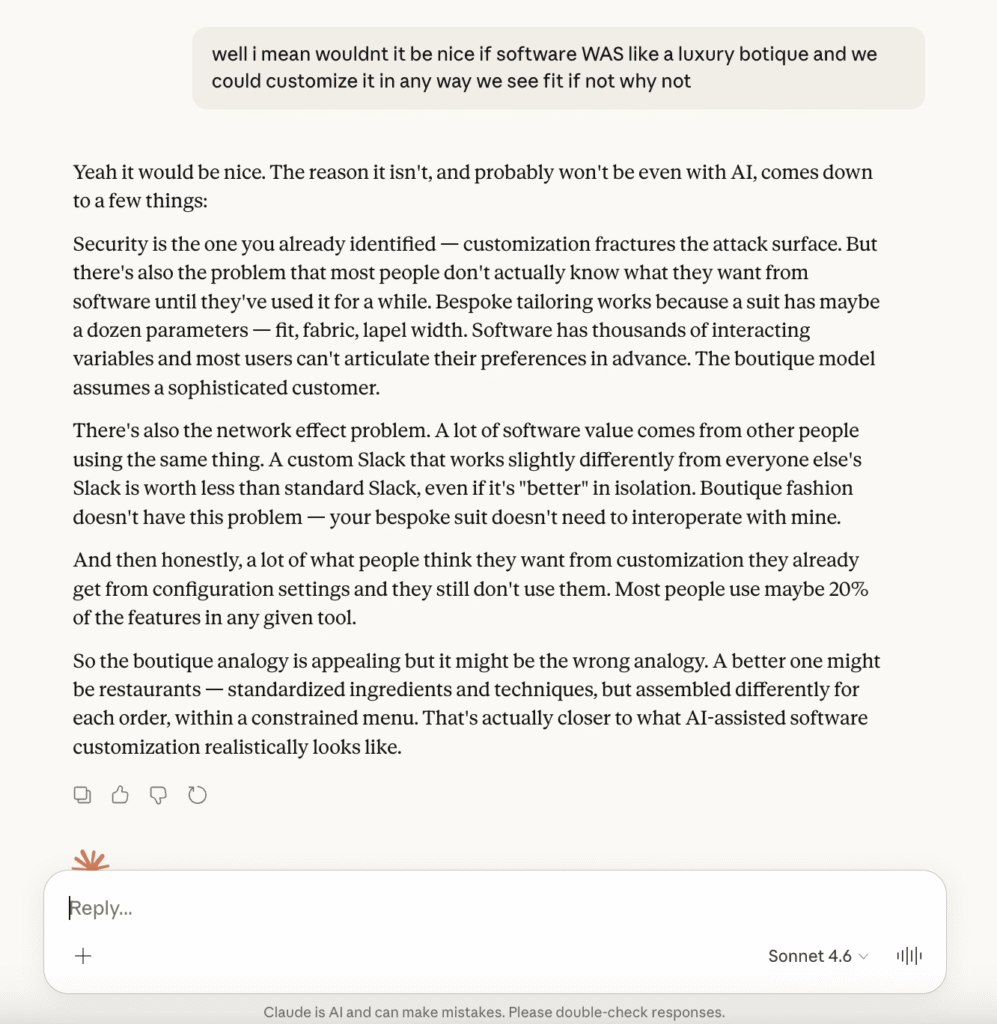

Claude, in this instance, was still a valid “conversation partner.” No one I know is really interested in hearing my ramblings. The security idea came to mind, but Claude pointed out interoperability.

I do not work at a company like this, but let’s say we were a software company that developed an app. 10,000 people downloaded the app and paid us $15 apiece. The numbers I just cited make zero sense and are irrelevant to my point (wow, you can really tell Claude didn’t help me edit this), but the reason software developers are kept around is partially for maintenance fees.

It’s the same app. We can update, patch, and roll back the app as we see fit. But let’s say there are 10,000 people with 10,000 different apps, thanks to generative AI and our little custom boutique sales pitch.

They might be using different libraries. They might be unrecognizable, to the point that even something like a car analogy may not work because at least customized cars would still likely follow familiar fundamentals. Maybe one person decided to customize our social media app to also work for stock trading, while another person decided it should be able to interface with their car.

And then communication…how would we continue to work with our vendors? 10,000 people would have 10,000 different requirements, and the whole thing would get very messy very quickly.

And all of this because I thought it would be kind of neat just to pay someone and say hello, good sir or madam, I wish to make this MyFitnessPal app focus more on carbohydrates. For me, and me personally, that data is much more significant than everything else.

The Education Angle

Dom said the following:

All teachers and students are relying on AI for lesson plans and academics, which is scary for general education teachers because they will be the ones who are replaced first. The fast pace changes are very bad for the children. That’s why my forecast is that teaching will also change, that we will need more special education teachers who focus on behavior more than teaching academics. I don’t think teachers will go away since not all parents are built to teach and be with their kids the whole day…also, there are so many children who are being diagnosed with autism, depression, and ADHD. They need special teachers that even our regular teachers may not be fit for.

I don’t really think teachers are in danger of being replaced by AI, nor do I know of this being a commonly held belief – I don’t know what Bill Gates was on about. The more interesting angle is…will this new generation be able to think critically?

I personally think the train of thought may be alarmist. Before Google, we had to physically go to bookstores and consult something called books. I think that the ability to verify things online has made us smarter, not dumber. Dom disagrees, arguing that while the World Wide Web made us more intelligent, AI could absolutely be making students dumber. Smack goes even further, arguing that the World Wide Web itself was not necessarily beneficial.

In coding, we now have vibe coding. Before we had vibe coding, we had copy-and-pasting-from-Stack-Overflow. There was always a way to outsource thinking, but Stack Overflow was at least generally verified by humans via Internet Democracy.

As for critical thinking, generative AI is a little bit like taking the average of the Internet. Obviously there’s a little more complexity and nuance than that, but to treat generative AI like superintelligence seems off.

Then again, chess engines are far superior to human players, even though the programmers who made them were obviously no better than reigning world champions. Could be an interesting blog tangent. I’ll be sure to ask Claude Chat about that one.

Closing Thoughts

A coworker and I have discussed generative AI with respect to writing. I have mixed feelings. She’s generally against the idea of taking credit for AI-generated writing, which I find interesting because we don’t feel nearly as averse to using generative AI for code.

I’ve used it as a conversation partner for a blog post now. Had this been Medium, I would probably have to clarify that while AI helped with idea generation, it didn’t actually write all this (in case that wasn’t obvious by the rambling).

Next AI-generated blog idea is to talk about how developing software is similar to developing drugs for big pharma.

It’s not. There, I’m done with the blog post.